Docker is one of those tools I wish I had learned to use a long time ago. I still remember how painful it always was to set up Elasticsearch on Linux, or to set up both Elasticsearch and Kibana on Windows, and to occasionally have to repeat the process to upgrade or recreate the Elastic stack.

Fortunately, Docker images now exist for all Elastic stack components including Elasticsearch, Kibana and Filebeat, so it’s easy to spin up a container, or to recreate the stack entirely in a matter of seconds.

Getting them to work together, however, is not trivial. Security is enabled by default from Elasticsearch 8.0 onwards, so you’ll need SSL certificates, and the examples you’ll find on the internet using docker-compose from the Elasticsearch 7.x era won’t work. Although the Elasticsearch docs provide an example docker-compose.yml that includes Elasticsearch and Kibana with certificates, this doesn’t include Filebeat.

In this article, I’ll show you how to tweak this docker-compose.yml to run Filebeat alongside Elasticsearch and Kibana.

- I’ll be doing this with Elastic stack 8.4 on Linux, so if you’re on Windows or Mac, drop the sudo from in front of the commands.

- You can find the relevant files for this article in the FekDockerCompose folder at the Gigi Labs BitBucket Repository.

- This is merely a starting point and by no means production-ready.

- A lot of things can go wrong along the way, so I’ve included a lot of troubleshooting steps.

The Doc Samples

The “Install Elasticsearch with Docker” page at the official Elasticsearch documentation is a great starting point to run Elasticsearch with Docker. The section “Start a multi-node cluster with Docker Compose” provides what you need to run a three-node Elasticsearch cluster with Kibana in Docker using docker-compose.

The first step is to copy the sample .env file and fill in any values you like for the ELASTIC_PASSWORD and KIBANA_PASSWORD settings, such as the following (don’t use these values in production):

# Password for the 'elastic' user (at least 6 characters)
ELASTIC_PASSWORD=elastic

# Password for the 'kibana_system' user (at least 6 characters)
KIBANA_PASSWORD=kibana

# Version of Elastic products
STACK_VERSION=8.4.0

# Set the cluster name
CLUSTER_NAME=docker-cluster

# Set to 'basic' or 'trial' to automatically start the 30-day trial
LICENSE=basic
#LICENSE=trial

# Port to expose Elasticsearch HTTP API to the host
ES_PORT=9200
#ES_PORT=127.0.0.1:9200

# Port to expose Kibana to the host
KIBANA_PORT=5601
#KIBANA_PORT=80

# Increase or decrease based on the available host memory (in bytes)
MEM_LIMIT=1073741824

# Project namespace (defaults to the current folder name if not set)
#COMPOSE_PROJECT_NAME=myproject
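Since the comments in the sample file ask for passwords of at least 6 characters, a quick check before starting the stack can save some head-scratching later. What follows is only a sketch: check_env_passwords is a hypothetical helper name (it doesn't ship with Docker or Elastic), and it assumes plain KEY=value lines like the ones above.

```shell
# Hypothetical helper (not part of Docker or the Elastic stack): fails if
# ELASTIC_PASSWORD or KIBANA_PASSWORD in the given .env file is missing or
# shorter than the 6 characters the sample file's comments ask for.
# The body runs in a subshell, so its variables don't leak into your shell.
check_env_passwords() (
  env_file="$1"
  status=0
  for name in ELASTIC_PASSWORD KIBANA_PASSWORD; do
    # Keep everything after "NAME=" from the last uncommented assignment.
    value="$(sed -n "s/^${name}=//p" "$env_file" | tail -n 1)"
    if [ "${#value}" -lt 6 ]; then
      echo "${name} is missing or shorter than 6 characters" >&2
      status=1
    fi
  done
  exit "$status"
)
```

You could then run something like check_env_passwords .env && sudo docker-compose up, so that the stack only starts when both passwords pass the check.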

Next, copy the sample docker-compose.yml. This is a large file so I won’t include it here, but in case the documentation changes, you can find an exact copy at the time of writing as docker-compose-original.yml in the aforementioned BitBucket repo.

Once you have both the .env and docker-compose.yml files, you can run the following command to spin up a three-node Elasticsearch cluster and Kibana:

sudo docker-compose up

You’ll see a lot of output and, after a while, if you access http://localhost:5601/, you should be able to see the Kibana login screen.

Troubleshooting tip: Unhealthy containers

It can happen that some of the containers fail to start up and claim to be “unhealthy”, without offering a reason. You can find out more by taking the container ID (provided in the error in the output) and running:

sudo docker logs <containerId>

Chances are that the error you’ll see in the logs will be this:

bootstrap check failure [1] of [1]: max virtual memory areas vm.max_map_count [65530] is too low, increase to at least [262144]

This is in fact explained in the same documentation page and elaborated in another one. Run the following command to fix it on Linux, or refer to the documentation for other OSes:

sudo sysctl -w vm.max_map_count=262144
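Keep in mind that sysctl -w only changes the value until the next reboot. If you'd rather apply the fix only when it's needed, a tiny check like the one below might help. It's just a sketch, and map_count_too_low is a name I made up; the 262144 threshold is the one quoted in the error message.

```shell
# Hypothetical helper: succeeds when the given vm.max_map_count value is
# below the minimum quoted in the bootstrap check error (262144).
map_count_too_low() {
  [ "$1" -lt 262144 ]
}
```

On Linux you could use it as map_count_too_low "$(sysctl -n vm.max_map_count)" && sudo sysctl -w vm.max_map_count=262144. To keep the setting across reboots, the Elasticsearch documentation suggests adding vm.max_map_count=262144 to /etc/sysctl.conf.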

Adding Filebeat to docker-compose.yml

The sample docker-compose.yml consists of five services: setup, es01, es02, es03 and kibana. While the documentation already explains how to Run Filebeat on Docker, what we need here is to run it alongside Elasticsearch and Kibana. The first step to do that is to add a service for it in the docker-compose.yml, after kibana:

  filebeat:
    depends_on:
      es01:
        condition: service_healthy
      es02:
        condition: service_healthy
      es03:
        condition: service_healthy
    image: docker.elastic.co/beats/filebeat:${STACK_VERSION}
    container_name: filebeat
    volumes:
      - ./filebeat.yml:/usr/share/filebeat/filebeat.yml
      - ./test.log:/var/log/app_logs/test.log
      - certs:/usr/share/elasticsearch/config/certs
    environment:
      - ELASTICSEARCH_HOSTS=https://es01:9200
      - ELASTICSEARCH_USERNAME=elastic
      - ELASTICSEARCH_PASSWORD=${ELASTIC_PASSWORD}
      - ELASTICSEARCH_SSL_CERTIFICATEAUTHORITIES=config/certs/ca/ca.crt

The most interesting part of this is the volumes:

- filebeat.yml: this is how we’ll soon be passing Filebeat its configuration.

- test.log: we’re including this example file just to see that Filebeat actually works.

- certs: this is the same as in all the other services and is part of what allows them to communicate securely using SSL certificates.
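Because YAML is sensitive to indentation, it may be worth validating the edited file before starting anything. docker-compose config parses the file (including the substitutions from .env), and docker-compose config --services lists the services it found. The helper below is a hypothetical convenience (the name missing_services is mine) that reads that list and reports any expected service that isn't there.

```shell
# Hypothetical helper: reads service names (one per line) on stdin and
# prints every expected service (passed as arguments) that is absent.
# Fails if at least one is missing. Runs in a subshell to keep variables local.
missing_services() (
  found="$(cat)"
  status=0
  for svc in "$@"; do
    # -x matches whole lines only, -F treats the name as a literal string.
    if ! printf '%s\n' "$found" | grep -qxF -- "$svc"; then
      echo "missing service: $svc"
      status=1
    fi
  done
  exit "$status"
)
```

For this article's setup, that would be something like sudo docker-compose config --services | missing_services setup es01 es02 es03 kibana filebeat. No output means all six services were parsed.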

Generating a Certificate for Filebeat

The setup service in docker-compose.yml has a script that generates the certificates used by all the Elastic stack services defined there. It creates a file at config/certs/instances.yml specifying what certificates are needed, and passes that to the bin/elasticsearch-certutil command to create them. We can follow the same pattern as the other services in instances.yml to create a certificate for Filebeat:

"  - name: es03\n"\
"    dns:\n"\
"      - es03\n"\
"      - localhost\n"\
"    ip:\n"\
"      - 127.0.0.1\n"\
"  - name: filebeat\n"\
"    dns:\n"\
"      - filebeat\n"\
"      - localhost\n"\
"    ip:\n"\
"      - 127.0.0.1\n"\
> config/certs/instances.yml;

Configure Filebeat

Create a file called filebeat.yml, and configure the input section as follows:

filebeat.inputs:
- type: filestream
  id: my-application-logs
  enabled: true
  paths:
    - /var/log/app_logs/*.log

Here, we’re using a filestream input to pick up any files ending in .log from the /var/log/app_logs/ folder. This path is arbitrary (as is the id), but it’s important that it corresponds to the location where we’re mounting the test.log file in docker-compose.yml:

    volumes:
      - ./filebeat.yml:/usr/share/filebeat/filebeat.yml
      - ./test.log:/var/log/app_logs/test.log
      - certs:/usr/share/elasticsearch/config/certs

While you’re at it, create the test.log file with any lines of text, such as the following:

Log line 1
Air Malta sucks
Log line 3

Back to filebeat.yml, we also need to configure it to connect to Elasticsearch using not only the Elasticsearch username and password, but also the certificates that we are generating thanks to what we did in the previous section:

output.elasticsearch:
  hosts: '${ELASTICSEARCH_HOSTS:elasticsearch:9200}'
  username: '${ELASTICSEARCH_USERNAME:}'
  password: '${ELASTICSEARCH_PASSWORD:}'
  ssl:
    certificate_authorities: "/usr/share/elasticsearch/config/certs/ca/ca.crt"
    certificate: "/usr/share/elasticsearch/config/certs/filebeat/filebeat.crt"
    key: "/usr/share/elasticsearch/config/certs/filebeat/filebeat.key"
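Filebeat ships with two subcommands that are handy for checking this configuration: filebeat test config verifies that filebeat.yml parses, and filebeat test output tries to connect to the configured output. Here's a minimal sketch, assuming the stack is already up (see “Running It All”) and the service is called filebeat as in the docker-compose.yml above. COMPOSE and check_filebeat are names I made up for this example.

```shell
# Assumption: the stack is running and the compose service is named "filebeat".
# COMPOSE is a made-up variable; set it to "echo" for a dry run that only
# prints the commands instead of running them.
COMPOSE="${COMPOSE:-sudo docker-compose}"

# Hypothetical wrapper around two real Filebeat subcommands.
# $COMPOSE is left unquoted on purpose so "sudo docker-compose" splits in two.
check_filebeat() {
  # 1. Does filebeat.yml parse?
  $COMPOSE exec filebeat filebeat test config || return 1
  # 2. Can Filebeat reach Elasticsearch using the configured certificates?
  $COMPOSE exec filebeat filebeat test output
}
```

Calling check_filebeat runs both checks inside the running container. If the Filebeat container has already exited (for example because of a broken config), exec won't work, and the container logs are the place to look instead.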

Troubleshooting tip: Peeking inside a container

In case you’re wondering where I got those certificate paths from, I originally looked inside the container to see where the certificates were being generated for the other services. You can get a container ID with docker ps, and then access the container as follows:

sudo docker exec -it <containerId> /bin/bash

More Advanced Filebeat Configurations

Although we’re using a basic filestream input in this example to keep things simple, Filebeat can be configured to gather logs from a large variety of data sources, ranging from web servers to cloud providers, thanks to its modules.

A good way to explore the possibilities is to download a copy of Filebeat and sift through all the different YAML configuration files that are provided as reference material.

Running It All

It’s now time to run docker-compose with Filebeat running alongside Kibana and the three-node Elasticsearch cluster:

sudo docker-compose up

Troubleshooting tip: Recreating certificates

The setup script has a check that won’t create certificates again if it has already been run (by looking for the config/certs/certs.zip file). So if you’ve already run docker-compose up before, you’ll need to recreate these certificates in order to get the one for Filebeat. The easiest way to do it is by just clearing out the volumes associated with this docker-compose:

sudo docker-compose down --volumes

Troubleshooting tip: filebeat.yml permissions

It’s also possible to get the following error:

filebeat | Exiting: error loading config file: config file ("filebeat.yml") can only be writable by the owner but the permissions are "-rw-rw-r--" (to fix the permissions use: 'chmod go-w /usr/share/filebeat/filebeat.yml')

The solution is, of course, to heed the error’s advice and run the following command (on your host machine, not in the container):

chmod go-w filebeat.yml
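If you want to catch this before starting the stack, you could check the file mode up front. Here's a small sketch using find's -perm test; config_writable_by_others is a hypothetical helper name, not a Filebeat or Docker command.

```shell
# Hypothetical helper: succeeds if the file is writable by its group or by
# others, which is the condition Filebeat complains about.
config_writable_by_others() {
  # -perm -020 matches group-writable files, -perm -002 world-writable ones.
  # -prune keeps find from descending if a directory is passed by mistake.
  [ -n "$(find "$1" -prune \( -perm -020 -o -perm -002 \) -print)" ]
}
```

Used as config_writable_by_others filebeat.yml && chmod go-w filebeat.yml, it only touches the permissions when they actually need fixing.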

Troubleshooting tip: Checking individual container logs

The logs coming from all the different services can be overwhelming, and the verbose JSON structure doesn’t help. If you suspect there’s a problem with a specific container (e.g. Filebeat), you can see the logs for that specific service as follows:

sudo docker-compose logs -f filebeat

You can of course still use sudo docker logs <containerId> if you want, but this alternative puts the name of the service before each log line, and some terminals colour it. This at least helps to visually distinguish one line from another.

Verifying Log Data in Kibana

You only know Filebeat really worked if you see the data in Kibana. Fire up http://localhost:5601/ in a browser and log in using “elastic” as the username, and whatever password you set up in the .env file (in this example it’s also “elastic” for simplicity).

The first test I usually do is to check whether an index has actually been created at all, because if it hasn’t, you can search all you want in Discover and you’re not going to find anything.

Click the hamburger menu in the top-left, scroll down a bit, and click on “Dev Tools”. There, enter the following query and run it (by clicking the Play button or hitting Ctrl+Enter):

GET _cat/indices

If you see an index whose name contains “filebeat” in the results panel on the right, then that’s encouraging.
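The same check can be done from a terminal if Kibana isn't cooperating. The sketch below assumes the default _cat/indices column layout, in which the index name is the third column, and simply filters for names containing “filebeat”. filebeat_indices is a hypothetical helper name.

```shell
# Hypothetical helper: reads _cat/indices output on stdin and prints the
# names (third column) of the indices whose name contains "filebeat".
filebeat_indices() {
  awk '$3 ~ /filebeat/ { print $3 }'
}
```

One way to feed it is to run curl inside the es01 container, much like the health checks in the sample docker-compose.yml do: sudo docker-compose exec -T es01 curl -s --cacert config/certs/ca/ca.crt -u elastic:elastic https://localhost:9200/_cat/indices | filebeat_indices. Replace the second “elastic” with whatever password you put in .env. If nothing is printed, no Filebeat index exists yet.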

Now that we know that some data exists, click the hamburger menu at the top-left corner again and go to “Discover” (the first item). There, you’ll be prompted to create a “data view” (if you don’t have any data, you’ll be shown a different prompt offering integrations instead). This “data view” is what older Kibana versions called an “index pattern”.

Click on the “Create data view” button.

You can give the data view a name and an index pattern. I suppose the name is arbitrary. For the index pattern, I still use filebeat-* (you’ll see the index name on the right turn bold as you type, indicating that it’s matching), although I’m not sure whether the wildcard actually makes a difference now that the index is some new thing called a data stream.

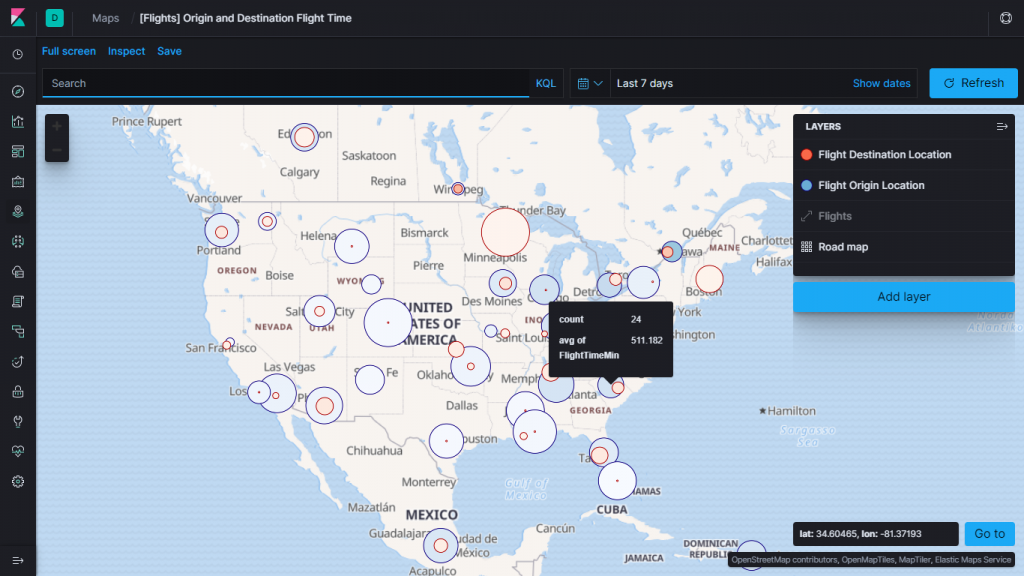

The timestamp field gets chosen automatically for you, so no need to change anything there. Just click on the “Save data view to Kibana” button. You should now be able to enjoy your lovely data.

Troubleshooting tip: Time range

If you don’t see any data in Discover, it doesn’t necessarily mean something went wrong. The default time range of “last 15 minutes” means you might not see any data if there wasn’t any indexed recently. Simply adjust it to a longer period (e.g. last 2 hours).
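Another way to get something inside the default time range is to append a fresh line to test.log and let Filebeat pick it up. This is a sketch with a made-up helper, append_log_line, and it assumes test.log is the same file that is mounted into the Filebeat container in docker-compose.yml.

```shell
# Hypothetical helper: appends one line, prefixed with a UTC timestamp, to
# the given log file. Appending with >> modifies the file in place, so the
# file mounted into the container sees the new line.
append_log_line() {
  printf '%s %s\n' "$(date -u +%Y-%m-%dT%H:%M:%SZ)" "$2" >> "$1"
}
```

For example, run append_log_line test.log "hello from the host" on the host, wait a few seconds, and refresh Discover.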

Conclusion

The Elastic stack is a wonderful set of tools, but its power comes with a lot of complexity. Docker makes it easier to run the stack, but it’s often difficult to find guidance even on simple scenarios like this. I’m hoping that this article makes things a little easier for other people wanting to run Filebeat alongside Elasticsearch and Kibana in Docker.